Why System Design Is Becoming a Strategic Priority

In one enterprise setup, eight learning platforms were active across functions. Each had a clear purpose when it was introduced: compliance, onboarding, leadership development, or external certifications. Nothing appeared unnecessary at the time.

A year later, reporting teams were still combining data manually across multiple systems. Not because integrations were missing, but because those systems were never expected to align in a shared structure.

That gap does not show up early. It builds slowly, usually after expectations begin to shift at the leadership level.

Why Procurement-Led EdTech Decisions Do Not Hold Up at Scale

At the start, procurement feels structured. A requirement is defined, vendors are evaluated, and a platform is selected based on immediate needs. The process works, and it is easy to justify.

The issue begins when those decisions start stacking over time.

In one organization, a learning experience platform was introduced to improve engagement. It worked well. Adoption increased. But the existing LMS continued to manage compliance and reporting. Over time, both systems started tracking similar data, but in slightly different ways.

No one had planned for that overlap. It developed gradually and only became visible when reporting expectations increased.

Some systems begin to drift apart in subtle ways. Data starts looking similar but behaves differently. Integration exists, but only at a surface level. And ownership is rarely clear when something breaks.

You start to see patterns like:

- A tool solving a local problem but creating a dependency elsewhere

- Reporting logic being rebuilt multiple times across teams

Then there are cases where no one is quite sure which system owns the final version of data. That is usually when manual reconciliation begins to increase.

At first, none of this feels critical. But over time, the effort needed to keep the systems working together becomes an issue in its own right.

That is where the shift begins. Quietly. From tools to structure.

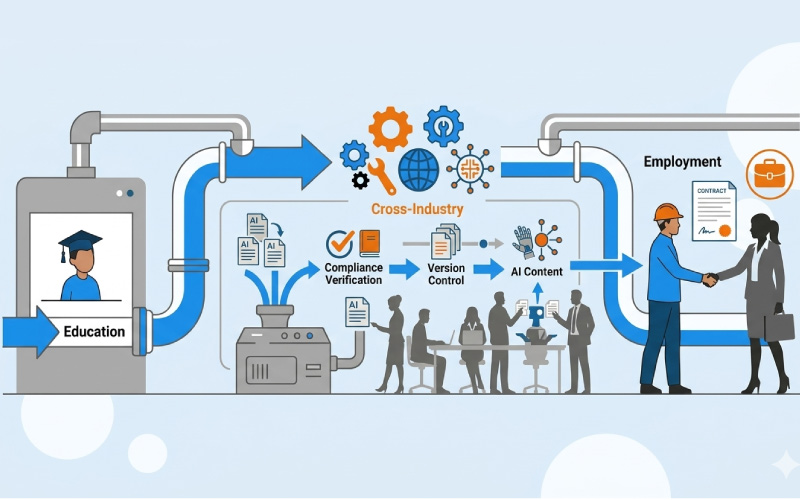

What Changes When Learning Systems Are Designed as an Ecosystem

Once the limits of isolated decisions become visible, the conversation moves away from tools. It starts focusing on how systems behave together over time.

This is where edtech ecosystem architecture becomes relevant, not as a framework to adopt, but as a way to think through decisions before they accumulate.

In one university network, leadership paused procurement for a short period. Not to reduce spending, but to understand how existing systems interacted. They mapped data movement. Identified overlaps. Looked at where delays were coming from.

The outcome was not dramatic. No major system replacement. But the clarity changed how future decisions were made.

A few things started guiding discussions more consistently:

- Learner identity was treated as a shared layer, not system-specific

- Reporting logic was centralized instead of distributed

- Integration was defined before vendor selection, not after

There were also decisions that did not follow a clear pattern. Some systems were left as they were, simply because changing them would have created more disruption than value.

That is often how ecosystem thinking develops. Not through uniform changes, but through selective alignment.

And once that alignment begins, the pressure shifts toward data behaving consistently across systems.

Why Data Interoperability Becomes the Real Constraint in Digital Learning Systems

Integration often gives a sense of completion. Systems connect. Dashboards populate. Data moves.

But alignment shows up later.

In one enterprise setup, two systems reported completion data for the same learners. The numbers were close enough to pass a casual review. Not close enough for leadership reporting.

The issue was not technical. It was definitional.

Completion meant different things in different systems.

This kind of problem does not stay isolated. It spreads into reporting, decision-making, and eventually governance.
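A definitional mismatch like this can be sketched in a few lines. The example below is illustrative only: the record shape, the two completion rules, and the 70-point pass mark are assumptions, not details from the case described. It shows two systems counting "completed" learners differently from the same records, and one shared rule applied in a single place.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Activity:
    """One learner's record for one course (hypothetical, simplified shape)."""
    learner_id: str
    progress_pct: float                 # share of content viewed, 0-100
    assessment_score: Optional[float]   # None if no assessment was taken


def completed_by_progress(a: Activity) -> bool:
    # Assumed rule for system A: all content was viewed.
    return a.progress_pct >= 100.0


def completed_by_assessment(a: Activity, pass_mark: float = 70.0) -> bool:
    # Assumed rule for system B: the assessment was taken and passed.
    return a.assessment_score is not None and a.assessment_score >= pass_mark


def completed_shared(a: Activity, pass_mark: float = 70.0) -> bool:
    # One agreed definition: content viewed AND assessment passed.
    return completed_by_progress(a) and completed_by_assessment(a, pass_mark)


records = [
    Activity("u1", 100.0, 85.0),
    Activity("u2", 100.0, None),
    Activity("u3", 80.0, 90.0),
    Activity("u4", 100.0, 60.0),
]

count_a = sum(completed_by_progress(r) for r in records)
count_b = sum(completed_by_assessment(r) for r in records)
count_shared = sum(completed_shared(r) for r in records)
print(count_a, count_b, count_shared)  # prints: 3 2 1
```

Neither system is wrong by its own rule. The point of the shared function is that the definition lives in one place, so a change to it reaches every report at once.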

Some inconsistencies are easy to spot. Others take time:

- Learner IDs that look identical but are structured differently

- Time-based data that reflects different reporting cycles

And then there are issues that only show up under pressure. Audit requests. Cross-system reporting. Leadership reviews.

In one case, aligning just one data layer, learner identity, reduced discrepancies across multiple reports. It did not solve everything. But it made inconsistencies visible.
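As a minimal sketch of what aligning learner identity might involve, the snippet below maps system-specific IDs to one canonical form and then compares two systems. The ID formats, the "EMP-" prefix, and the normalization rules are hypothetical and would need to be agreed for a real environment.

```python
def canonical_learner_id(raw: str) -> str:
    """Map a system-specific learner ID to one canonical form.

    Assumed rules (hypothetical): ignore surrounding whitespace and case,
    drop an optional "EMP-" prefix, and drop leading zeros.
    """
    value = raw.strip().upper()
    if value.startswith("EMP-"):
        value = value[len("EMP-"):]
    stripped = value.lstrip("0")
    return stripped or "0"  # keep a single zero if the ID was all zeros


# Hypothetical exports from two systems that describe overlapping learners.
lms_ids = ["EMP-00123", "emp-00456", "00789"]
lxp_ids = ["123", " 456 ", "999"]

lms = {canonical_learner_id(i) for i in lms_ids}
lxp = {canonical_learner_id(i) for i in lxp_ids}

matched = sorted(lms & lxp)       # learners both systems agree on
only_in_lms = sorted(lms - lxp)   # present in the LMS export only
only_in_lxp = sorted(lxp - lms)   # present in the LXP export only

print(matched, only_in_lms, only_in_lxp)
```

Normalization does not resolve the unmatched learners. It only separates genuine gaps from formatting differences, which is the visibility described above.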

That visibility changes how systems are managed. Because once data starts aligning, gaps become harder to ignore.

And that is usually where governance enters, not as a choice, but as a requirement.

How Learning Platform Governance Starts Influencing System Behavior

Governance rarely starts early. It is usually introduced after systems begin to show strain.

At that point, it works as a layer on top of existing complexity.

In one organization, three teams managed three platforms. Each had its own way of handling users, content, and reporting. Governance tried to standardize this.

It worked in parts. Not fully.

Where Governance Begins to Break Down

- Ownership is defined, but overlaps continue

- Processes exist, but systems behave differently

Where Governance Starts Stabilizing Systems

- Decisions are tied to system roles, not teams

- Data ownership is assigned clearly

- Changes follow a defined path
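One way to make data ownership explicit is to record it as data and check it. The sketch below is an assumption-heavy illustration: the system names, data domains, and the rule that each domain has exactly one owning system are all hypothetical.

```python
from collections import defaultdict

# Hypothetical declarations: each system lists the data domains it claims to own.
claimed_ownership = {
    "lms": ["compliance_records", "completion_status"],
    "lxp": ["engagement_events", "completion_status"],
    "hris": ["learner_identity"],
}

# Hypothetical list of domains that reporting depends on.
required_domains = [
    "learner_identity",
    "completion_status",
    "compliance_records",
    "certifications",
]


def ownership_issues(claims, required):
    """Return (contested, unowned) under a one-owner-per-domain rule."""
    owners = defaultdict(list)
    for system, domains in claims.items():
        for domain in domains:
            owners[domain].append(system)
    # Domains claimed by more than one system.
    contested = {d: sorted(s) for d, s in owners.items() if len(s) > 1}
    # Required domains that no system claims.
    unowned = sorted(d for d in required if d not in owners)
    return contested, unowned


contested, unowned = ownership_issues(claimed_ownership, required_domains)
print(contested)  # {'completion_status': ['lms', 'lxp']}
print(unowned)    # ['certifications']
```

A check like this does not decide who should own a domain. It only surfaces the overlaps and gaps so that the decision is made deliberately.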

Governance, in this context, is not about enforcing control. It is about reducing variation where it matters.

Once that begins to settle, cost patterns start becoming easier to understand.

Why Total Cost of Ownership Expands Beyond Procurement Costs

Procurement costs are easy to track. Licensing, implementation, vendor agreements.

What happens after that is less visible.

Costs start appearing in smaller layers. Integration fixes. Reporting adjustments. Time spent aligning systems.

In one case, removing a redundant platform reduced costs slightly. But the bigger impact came from reduced reporting effort. Teams spent less time reconciling data.

Not all cost patterns are obvious:

- Some systems remain active because replacing them would disrupt dependencies

- Support costs increase as systems grow more interconnected

- External expertise is brought in to maintain alignment

There are also costs that are not labeled as costs. Time delays. Decision lag. Rework.

These do not appear in budgets. But they shape how effective the system actually is.

And over time, cost starts linking directly with risk.

How Risk and Compliance Oversight Become More Complex in Fragmented Systems

Compliance requirements remain consistent. Data privacy, audit readiness, certification tracking.

What changes is how difficult it becomes to meet them.

In fragmented systems, data is spread out. Access controls differ. Audit trails are incomplete in parts.

In one enterprise setup, audit preparation required pulling data from multiple systems. Each extract needed validation. The process worked, but it was slow and dependent on manual checks.

That introduces risks. Not because systems fail, but because alignment is partial.

A more structured system reduces dependency. It does not remove risk. But it makes it easier to manage.

At this stage, the shift from procurement to ecosystem design is no longer only strategic. It becomes operational.

How MITR Learning and Media Supports Structured EdTech Ecosystem Design

As organizations begin to shift toward ecosystem thinking, the challenge is rarely about adding new platforms. It is about making existing systems work together in a more defined way.

MITR Learning and Media operates in this space, focusing on enterprise digital learning through system-level alignment.

In one engagement, an institution approached MITR for content updates. The work expanded into mapping system dependencies, aligning reporting structures, and improving how data moved across platforms.

The initial requirement was narrow. The underlying issue was structural.

Work in this area often includes:

- Understanding how existing systems interact before introducing changes

- Supporting integration approaches that reflect long-term system behavior

There are also cases where the focus is not on adding anything new, but on simplifying what already exists.

That kind of intervention does not sit at the tool level. It connects decisions across architecture, governance, and execution.

The Shift from Procurement to Ecosystem Architecture Happens Gradually

Organizations do not move from procurement to ecosystem architecture in a single step. The shift happens in parts.

Data alignment. Governance adjustments. Vendor consolidation.

Over time, these begin to connect.

Systems behave more predictably. Reporting stabilizes. Costs become easier to understand.

The structure does not become simpler. But it becomes clearer.

And that clarity is what supports long-term decisions in enterprise digital learning environments.

It does not remove complexity, but it makes that complexity easier to work with. Systems begin to respond in more predictable ways, and decisions carry forward without needing to be reworked each time.

That is usually when learning environments start behaving less like a collection of tools and more like something intentionally designed.

Contact MITR Learning and Media to design a structured edtech ecosystem that aligns your systems, data, and governance from the ground up.

Frequently Asked Questions

What is learning engineering in corporate training?

Learning engineering combines learning science, data analysis, and engineering methods to design data-driven learning systems. These systems help organizations measure skill development and support capability growth beyond traditional course-based training.

How is AI used in enterprise learning and development?

AI helps learning teams study patterns in employee training activity. It can highlight skill gaps and suggest learning pathways for different roles. Many organizations also use AI tools to support workforce analytics and capability planning.

Will AI replace instructional designers?

AI will not replace instructional designers. Instead, it changes how they work. Designers now focus more on learning system design and experimentation. AI tools support analysis and content generation.

What skills do learning engineers need?

Learning engineers combine expertise in learning science, data analytics, and technology systems. They design learning architectures and analyze workforce capability data. Their work helps organizations optimize learning ecosystems.

How can organizations implement learning engineering?

Organizations begin by building workforce capability frameworks. They then integrate learning data across enterprise systems. Learning teams run experiments to evaluate training effectiveness. Analytics helps improve workforce capability development over time.

Organizations that adopt learning engineering gain clearer visibility into workforce readiness. They move beyond course completion metrics. Learning becomes a performance system.