Why AI Content Creation Still Struggles to Deliver Scalable Compliance Training

Enterprise learning teams are producing more training content than they did even two years ago. Generative AI has accelerated the earliest stage of course development. Draft compliance modules appear quickly. Updates to regulatory programs can be generated within hours rather than weeks.

Yet something curious happens after that initial burst of productivity. Content accumulates inside shared folders, authoring tools, and LMS staging environments. Deployment timelines remain almost unchanged. Regulatory training updates still require weeks to move through enterprise systems before employees actually see the final modules.

Senior L&D leaders often notice this disconnect quickly. Content creation was rarely the real bottleneck inside compliance programs. Validation cycles, governance structures, and deployment coordination usually determine how quickly training reaches the workforce.

Once AI begins generating content at scale, those operational layers become even more visible. The workflow that follows content creation begins to matter far more than the generation step itself.

Understanding where that friction appears is the starting point for any meaningful compliance training automation strategy.

Where SME Validation Slows Compliance Training Automation

Every enterprise compliance program depends on subject matter expertise. Regulations change frequently, and training content must reflect those updates precisely. Even when generative AI produces the initial course material, organizations rarely bypass SME verification.

Validation therefore becomes the first point where enterprise timelines stretch.

A financial institution updating anti-money laundering training recently generated hundreds of learning assets using AI-assisted tools. Draft creation took only a few days. The approval process, however, extended for several weeks because each module required legal verification, risk team approval, and operational review.

Patterns like this appear repeatedly in large compliance ecosystems.

Several validation challenges tend to surface:

- SMEs receive multiple versions of training modules from different teams working simultaneously.

- Regulatory training updates arrive while content is still under review, forcing reviewers to revisit previously approved sections.

- Experts responsible for validation usually balance several responsibilities, which slows feedback cycles.

- Review comments circulate through email threads and document versions, making it difficult to confirm which edits were finalized.

In practice, organizations discover that AI increases the volume of training content, but the validation system remains unchanged. The mismatch between production speed and review capacity becomes obvious.

Once that pressure appears, another operational issue surfaces almost immediately. Even after SMEs approve a module, learning teams still need to track which version ultimately reaches employees.

That challenge introduces the problem of version control.

Why Version Control in eLearning Breaks Under AI-Driven Content Production

Version control in eLearning has always required careful coordination. Generative AI simply exposes how fragile those systems can be when content volumes increase rapidly.

Most enterprise learning environments were designed for slower development cycles. Course updates moved through structured production phases. Authoring tools, review platforms, and LMS environments maintained a predictable sequence.

When AI begins producing training assets quickly, those structures often struggle to keep up.

Multiple Authoring Streams

Different departments frequently generate content simultaneously. Compliance teams might produce regulatory modules. HR groups create policy training. Regional offices adapt the same material for local regulations.

Without consistent version control practices, several variations of the same training module begin circulating across the organization.

Inconsistent Content Tracking

Version control in eLearning becomes complicated when teams rely on shared drives, email attachments, or manual tracking spreadsheets. Updates may appear correct inside one system while older versions remain active elsewhere.

During audits, learning leaders are sometimes asked a simple but difficult question: which version of the compliance training did employees actually complete?
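One way to make that question answerable is to store a content fingerprint with every completion record, so the record points at the exact text the employee saw rather than at a file name. The following is a minimal sketch under stated assumptions: the `CompletionRecord` fields, the `record_completion` helper, and the sample module text are all hypothetical and do not reflect any particular LMS.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


def content_fingerprint(module_text: str) -> str:
    """Return a short, stable identifier derived from the module's content."""
    return hashlib.sha256(module_text.encode("utf-8")).hexdigest()[:12]


@dataclass(frozen=True)
class CompletionRecord:
    # Hypothetical record layout, for illustration only.
    employee_id: str
    module_id: str
    fingerprint: str  # identifies the exact content the employee completed
    completed_at: datetime


def record_completion(employee_id: str, module_id: str, module_text: str) -> CompletionRecord:
    """Create a completion record bound to the content that was delivered."""
    return CompletionRecord(
        employee_id=employee_id,
        module_id=module_id,
        fingerprint=content_fingerprint(module_text),
        completed_at=datetime.now(timezone.utc),
    )


# Two hypothetical revisions of the same module.
v1 = "AML basics: report suspicious transactions to the compliance desk."
v2 = "AML basics: report suspicious transactions within 24 hours."

rec = record_completion("emp-001", "aml-101", v1)
print(rec.fingerprint == content_fingerprint(v1))  # True
print(rec.fingerprint == content_fingerprint(v2))  # False
```

Because the fingerprint is derived from the content itself, two files with the same name but different text can never be confused in the completion history.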

Rapid Content Expansion

AI tools accelerate content generation significantly. What once required months of development can now appear within days. That increase in volume makes version tracking even more complex if governance mechanisms remain unchanged.

Once organizations encounter these challenges, the conversation begins shifting toward compliance exposure rather than operational inconvenience.

Regulatory oversight rarely tolerates ambiguity in training records. That reality introduces a deeper concern around compliance risk.

Regulatory Training Updates and the Risk of Deployment Gaps

Regulatory environments evolve constantly. New guidelines appear. Existing standards change. Organizations must update training content accordingly and ensure those updates reach the workforce quickly.

The difficulty is rarely the content itself. Learning teams typically respond to regulatory changes within days. The challenge lies in distributing updated training consistently across enterprise systems.

A manufacturing company responding to new workplace safety regulations recently experienced this issue. AI-generated updates were incorporated into existing safety modules almost immediately. However, deployment across global regions took months because local systems operated independently.

Eventually, an internal audit discovered that some locations were still running earlier versions of the training.

Situations like this create two common problems:

- Compliance leaders struggle to verify whether all employees have completed the same regulatory training updates.

- Audit teams spend considerable effort reconstructing which versions of training content were active at specific points in time.
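Both problems become easier when each region keeps a dated deployment log. The sketch below assumes such a log exists; the `DEPLOYMENTS` structure, region names, dates, and version labels are invented for illustration. It shows a point-in-time lookup and a check for regions that lag behind the latest version.

```python
from bisect import bisect_right
from datetime import date
from typing import List, Optional

# Hypothetical deployment log: region -> [(go-live date, version)], sorted by date.
DEPLOYMENTS = {
    "emea": [(date(2024, 1, 10), "v1"), (date(2024, 6, 3), "v2")],
    "apac": [(date(2024, 1, 15), "v1")],
}


def active_version(region: str, on: date) -> Optional[str]:
    """Return the version live in `region` on `on`, or None if nothing was deployed yet."""
    history = DEPLOYMENTS.get(region, [])
    dates = [go_live for go_live, _ in history]
    idx = bisect_right(dates, on)  # number of deployments on or before `on`
    return history[idx - 1][1] if idx else None


def lagging_regions(latest: str, on: date) -> List[str]:
    """List regions whose active version on `on` differs from `latest`."""
    return sorted(r for r in DEPLOYMENTS if active_version(r, on) != latest)


print(active_version("emea", date(2024, 3, 1)))  # v1
print(active_version("emea", date(2024, 6, 3)))  # v2
print(lagging_regions("v2", date(2024, 7, 1)))   # ['apac']
```

The same lookup answers the audit question directly: given a completion date and a region, it returns the version that was live at that moment.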

Organizations facing these issues often begin reconsidering their overall learning infrastructure. Content generation alone does not resolve deployment complexity.

At this stage, many enterprises start exploring more structured workflows that connect content creation, validation, and deployment.

MITR Learning and Media often supports organizations during this transition by helping them design learning ecosystems where regulatory updates, validation checkpoints, and deployment processes operate as a coordinated system rather than separate activities.

Once those structures begin forming, enterprises move closer to building scalable compliance content pipelines.

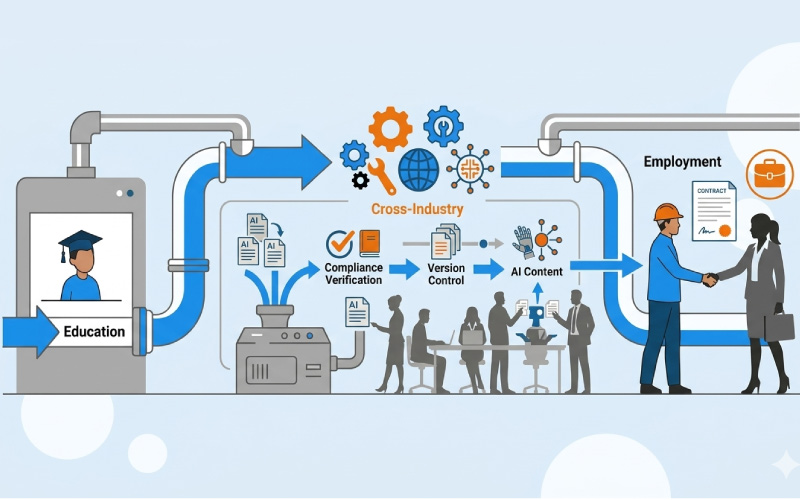

Building Structured Pipelines for Scalable Compliance Content

Organizations that stabilize their compliance training environments typically stop focusing exclusively on authoring tools. Instead, they examine the entire lifecycle through which learning content moves.

Compliance programs that operate efficiently tend to follow several operational patterns.

- AI-generated drafts move into structured validation workflows where SMEs review organized content rather than scattered files.

- Version control systems record every modification, allowing teams to track how regulatory training updates evolve over time.

- Deployment processes synchronize updated training modules across multiple learning platforms, so employees receive consistent content.

- Audit documentation automatically records which versions employees completed and when those modules were deployed.

- Compliance leaders gain clearer visibility into the entire training lifecycle.

These workflows begin resembling software development pipelines more than traditional course publishing.

Learning teams generate material. Validation processes verify regulatory accuracy. Version tracking systems maintain content history. Deployment mechanisms distribute updates consistently across enterprise systems.
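The four stages above can be sketched as a small state machine in which a module cannot be deployed until every required reviewer has signed off. This is an illustrative sketch, not a reference implementation: the `Stage` names, the `Module` class, and the three approver roles (taken from the anti-money laundering example earlier in this article) are assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in_review"
    APPROVED = "approved"
    DEPLOYED = "deployed"


# Assumed sign-off roles, borrowed from the AML example in this article.
REQUIRED_APPROVERS = frozenset({"legal", "risk", "operations"})


@dataclass
class Module:
    module_id: str
    version: int = 1
    stage: Stage = Stage.DRAFT
    approvals: set = field(default_factory=set)
    history: list = field(default_factory=list)  # (version, from_stage, to_stage)

    def _move(self, new_stage: Stage) -> None:
        self.history.append((self.version, self.stage.value, new_stage.value))
        self.stage = new_stage

    def submit(self) -> None:
        if self.stage is not Stage.DRAFT:
            raise ValueError("only drafts can be submitted for review")
        self._move(Stage.IN_REVIEW)

    def approve(self, role: str) -> None:
        if self.stage is not Stage.IN_REVIEW:
            raise ValueError("module is not under review")
        if role not in REQUIRED_APPROVERS:
            raise ValueError(f"unknown approver role: {role}")
        self.approvals.add(role)
        if self.approvals >= REQUIRED_APPROVERS:  # all sign-offs collected
            self._move(Stage.APPROVED)

    def deploy(self) -> None:
        if self.stage is not Stage.APPROVED:
            raise ValueError("only approved modules can be deployed")
        self._move(Stage.DEPLOYED)

    def revise(self) -> None:
        """A regulatory change returns the module to draft and voids earlier sign-offs."""
        self._move(Stage.DRAFT)
        self.version += 1
        self.approvals.clear()


m = Module("aml-101")
m.submit()
m.approve("legal")
m.approve("risk")
print(m.stage.value)  # in_review
m.approve("operations")
m.deploy()
print(m.stage.value)  # deployed
```

Voiding approvals in `revise` mirrors the situation described earlier, where a regulatory update that arrives mid-review forces reviewers to revisit sections they had already approved.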

Organizations working with partners such as MITR Learning and Media often build these structured workflows gradually. Instead of treating compliance training automation as a single technology upgrade, they redesign the processes that move content from creation to enterprise deployment.

Generative AI will continue accelerating content creation. The enterprises that benefit most from that speed are usually the ones that strengthen the operational systems surrounding it.

When validation, version control, and deployment operate within a coordinated pipeline, scalable compliance content becomes far more achievable.

Frequently Asked Questions

What is learning engineering in corporate training?

Learning engineering combines learning science, data analysis, and engineering methods to design data-driven learning systems. These systems help organizations measure skill development and support capability growth beyond traditional course-based training.

How is AI used in enterprise learning and development?

AI helps learning teams study patterns in employee training activity. It can highlight skill gaps and suggest learning pathways for different roles. Many organizations also use AI tools to support workforce analytics and capability planning.

Will AI replace instructional designers?

AI will not replace instructional designers. Instead, it changes how they work. Designers now focus more on learning system design and experimentation. AI tools support analysis and content generation.

What skills do learning engineers need?

Learning engineers combine expertise in learning science, data analytics, and technology systems. They design learning architectures and analyze workforce capability data. Their work helps organizations optimize learning ecosystems.

How can organizations implement learning engineering?

Organizations begin by building workforce capability frameworks. They then integrate learning data across enterprise systems. Learning teams run experiments to evaluate training effectiveness. Analytics helps improve workforce capability development over time.

Organizations that adopt learning engineering gain clearer visibility into workforce readiness. They move beyond course completion metrics. Learning becomes a performance system.