Why Regional Skill Ecosystems Still Feel Disconnected Even When Activity Is High

In many regions, there is no shortage of activity. Training programs are running. Institutions are adding new courses. Governments are funding initiatives. Employers are part of discussions, at least at certain points.

And yet, when you step back and look at outcomes across the region, things do not fully line up.

Roles remain open longer than expected. People complete programs but still need retraining once hired. Some industries stay engaged; others drop off quietly after early involvement. Nothing looks broken in isolation. But taken together, the system feels slightly out of sync.

This is where the idea of regional skill ecosystems becomes less about structure and more about how different parts stay connected over time.

It usually starts with demand. Or at least, that is where most regions begin.

How Workforce Demand Mapping Actually Influences Decisions on the Ground

Most workforce development strategies rely on some form of demand mapping. Employers are asked what they need. Data is collected, reviewed, and shared across institutions and policymakers.

The expectation is simple. Better input should lead to better decisions.

That does happen, but only up to a point.

Patterns like this appear repeatedly in regional skill ecosystems.

What tends to get missed is how quickly these inputs lose relevance once they move through the system. Not because they were wrong, but because they were treated as something fixed.

In one region, hiring data showed steady growth in mid-level technician roles. Institutions responded by expanding related programs. Six to eight months later, companies had already adjusted their hiring mix, leaning toward fewer general roles and more specialized ones. The shift was not dramatic, but it was enough to make the earlier program changes feel slightly misaligned.

The issue was not the data itself. It was how it was used.

Some teams treat demand mapping like a checkpoint. Collect, validate, publish, and move on. But the reality is closer to this:

- The moment data is published, it begins to age

- Smaller changes, the kind that do not show up in reports, start affecting hiring first

- Employers rarely come back unless there is a structured reason to do so

- Decisions based on older inputs continue to move forward anyway

So even when the starting point is strong, the connection between demand and action starts to loosen.

And once institutions begin to act on this data, another layer of delay comes into play. Because responding to demand is not only about knowing what to change, but also about how quickly that change can happen.

Why Education–Industry Alignment Slows Down After the First Round of Changes

At the point where institutions begin adjusting programs, alignment still looks achievable. Input is available. Direction is clear enough. There is usually some urgency as well.

Then the pace drops.

Part of it comes from how institutions operate. Program changes require approval. Faculty readiness becomes a factor. Infrastructure may not support immediate shifts. None of this is unexpected, but it adds time.

In one instance, an institute updated its curriculum to include newer tools used by local employers. The content itself was relevant. But only a few instructors were comfortable teaching it at first. Over time, delivery became uneven across batches, which affected how useful the program actually was for employers.

There are also smaller, less visible slowdowns:

- Industry input often arrives in the form of “what is needed,” but not “how it should be taught”

- Faculty development runs separately from curriculum updates, even though both are connected

- Changes are made in blocks, not in smaller, continuous adjustments

This is where education-industry alignment starts to weaken. Not because either side is disengaged, but because the way they work does not match the speed of change.

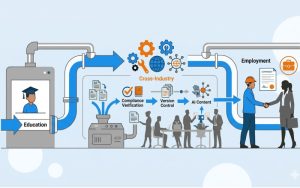

In some regions, this gap has been reduced by bringing in digital learning solutions that allow content updates without waiting for full program revisions. Modular formats, shared content libraries, and external inputs have helped institutions move a little faster.

MITR Learning and Media typically works within this space. Not by changing institutional systems entirely, but by supporting learning transformation strategy efforts that make updates easier to introduce and maintain over time.

Even then, alignment does not hold unless employers remain part of the process beyond initial input. That is where participation models start to matter more.

What Changes When Employers Stay Involved Beyond Initial Consultations

Employer involvement often begins with structured discussions. Inputs are gathered, expectations are shared, and there is a sense that alignment is possible.

The difference shows up later.

In regions where employer involvement continued beyond those early stages, the interaction looked less formal but more consistent. Instead of waiting for review cycles, some employers stayed connected through ongoing touchpoints.

In one case, a group of companies worked with a training provider to review small parts of a program every quarter. Not the full curriculum. Just specific modules tied to their immediate needs. The changes were not large, but they kept the program closer to what was actually required.

Elsewhere, employers contributed in different ways:

- offering short project assignments that reflected real work conditions

- giving feedback on learner performance after hiring, not just before training

- participating in smaller, more frequent discussions instead of large annual reviews

This kind of involvement does not scale easily without structure. That is where public-private skill partnerships come into play. They set expectations for continued engagement, rather than a one-time contribution.

MITR’s role in such environments often sits in the middle. Keeping communication active, making sure updates from one side reach the other in time, and aligning these inputs with broader enterprise digital learning efforts where needed.

Why Funding Structures Quietly Shape What Continues and What Stops

Funding is often seen as a starting condition. If resources are available, programs can be run. If not, they stall.

In practice, funding shapes behavior over time.

Short-term funding cycles tend to drive short-term outcomes. Programs focus on meeting targets tied to funding conditions: enrollment numbers, completion rates, and immediate placements. These are easier to track and report.

But they do not always reflect whether the ecosystem is working well.

In regions where funding was more balanced, combining public support with industry contribution, there was slightly more flexibility. Programs could adjust without waiting for new approvals. Updates could be introduced based on need rather than funding cycles alone.

Even then, certain patterns remain hard to avoid. For example, funding tied too closely to volume tends to push institutions toward scale, sometimes at the cost of relevance. At the same time, limited flexibility in how funds can be used makes it harder to respond to smaller, ongoing changes.

These are not structural failures. They are design choices that affect how the system behaves over time.

How Governance and Measurement Shift the Focus from Activity to Actual Outcomes

Most regional systems track what is easy to measure. How many learners entered programs. How many completed them. Sometimes how many were placed.

These numbers are useful, but they do not always reflect real alignment.

In one region, a shift was made in how outcomes were tracked. Instead of focusing only on placements, the system started tracking how long it took for learners to stabilize in their roles and whether they stayed beyond a certain period. The data was harder to collect, but it revealed something different. Some programs that looked successful earlier were not leading to stable employment.

That changed how decisions were made.

Measurement, in this sense, becomes part of governance. It influences what is reviewed, what is adjusted, and what continues.

A few shifts tend to make a difference:

- looking at outcomes over time, not just immediate results

- sharing data across institutions and employers instead of keeping it separate

- adjusting programs based on gaps that appear after placement, not just before

MITR Learning and Media often supports this layer by connecting learning data with employer feedback, helping move organizational learning transformation beyond isolated program success toward system-level improvement.

There is no single way to build a regional skill ecosystem that works everywhere. But certain patterns are becoming easier to recognize. Systems that stay connected, keep updating inputs, and measure what actually matters tend to adjust better over time.

Others continue to operate with visible effort, but uneven results.

Over time, that gap becomes harder to ignore. Not because activity slows down, but because outcomes stop improving at the same pace. The system keeps moving, but not necessarily in the right direction.

This is where a more connected approach to learning, data, and employer input starts to matter more than individual program success.

Contact MITR Learning and Media to design and strengthen your regional skill ecosystem with aligned digital learning solutions and a long-term workforce development strategy.

Frequently Asked Questions

What is learning engineering in corporate training?

Learning engineering combines learning science, data analysis, and engineering methods to design data-driven learning systems. These systems help organizations measure skill development and support capability growth beyond traditional course-based training.

How is AI used in enterprise learning and development?

AI helps learning teams study patterns in employee training activity. It can highlight skill gaps and suggest learning pathways for different roles. Many organizations also use AI tools to support workforce analytics and capability planning.

Will AI replace instructional designers?

AI will not replace instructional designers. Instead, it changes how they work. Designers now focus more on learning system design and experimentation. AI tools support analysis and content generation.

What skills do learning engineers need?

Learning engineers combine expertise in learning science, data analytics, and technology systems. They design learning architectures and analyze workforce capability data. Their work helps organizations optimize learning ecosystems.

How can organizations implement learning engineering?

Organizations begin by building workforce capability frameworks. They then integrate learning data across enterprise systems. Learning teams run experiments to evaluate training effectiveness. Analytics helps improve workforce capability development over time.

Organizations that adopt learning engineering gain clearer visibility into workforce readiness. They move beyond course completion metrics. Learning becomes a performance system.