In several universities, a program approved in one academic year is delivered only two cycles later. By that point, parts of the content are already dated. They are not incorrect, but they are no longer aligned with how work is done in the companies that hire graduates of these programs.

This gap is often discussed as a curriculum issue and is usually attributed to design teams or to a lack of industry input. That explanation feels reasonable at first, especially when looking at outdated course material.

But when the full timeline is examined, a different pattern starts to form. The delay is not introduced at the point of design. It builds gradually, across review steps, approvals, and delivery planning. By the time the program reaches learners, the context it was designed for has already shifted.

That makes curriculum lag less of a design failure and more of a structural outcome.

How Program Timelines Move Slower Than Industry Change

Academic programs follow fixed schedules. They are tied to semesters, intake cycles, and institutional planning calendars. These structures exist for stability, and they serve that purpose well.

The issue begins when these timelines are compared to how industries operate.

In one case, a university introduced updates to its data engineering program, including changes to tools and workflows that were widely used at the time of design. The proposal moved through internal review over several months, then waited for the next academic cycle to be implemented. By the time students engaged with the updated content, some of the tools had already been replaced in enterprise environments.

The curriculum was accurate when it was written. It was simply out of sync when it was delivered.

This is not an isolated situation. It repeats in areas where tools and practices evolve continuously, such as AI, digital marketing, and product design. Small changes accumulate over time, and fixed academic cycles are not built to absorb those changes at the same pace.

As institutions try to reduce this gap, they often focus on increasing the frequency of updates. That helps in limited ways, but it does not address what happens once a program enters the approval system, where timelines tend to stretch further.

Why Approval and Accreditation Systems Extend These Timelines

Once a curriculum update is drafted, it moves through multiple layers of validation. Each layer has a defined role, and none of them are unnecessary.

Departments review academic quality. Committees ensure consistency across programs. Accreditation bodies check compliance with broader standards. These processes protect the system’s integrity.

At the same time, they introduce delays that are difficult to compress.

In one institution, a program revision was held back because assessment formats needed to be standardized across departments. The content itself had already been developed and reviewed. What caused the delay was not the subject matter, but the need to align with institutional formats and policies.

This is where the structure begins to show its limits. Each review step works independently, and feedback often moves in sequence rather than in parallel. Even small changes can take months to move through the system, especially when different groups evaluate different aspects of the same program.

To make this more concrete, the contrast between academic and industry timelines can be mapped directly.

Program Update Timelines vs Industry Change Cycles


| Aspect | Academic Program Updates | Industry Change Cycles |
|---|---|---|
| Update frequency | Periodic (aligned with semesters or academic years) | Continuous, often incremental |
| Approval process | Multi-layered, sequential reviews | Decentralized, team-level decisions |
| Implementation timing | Fixed to academic calendar | Immediate or short-cycle deployment |
| Feedback loops | Formal, documented, time-bound | Informal, rapid, iterative |
| Impact of delay | Content becomes outdated at delivery | Teams adapt in real time |

The table does not suggest that one system is better than the other. It shows that they are built for different purposes. Academic systems prioritize consistency and validation. Industry systems prioritize speed and adaptation.

The difficulty arises when one is expected to match the pace of the other.

As programs move through these extended timelines, another layer of complexity becomes visible during delivery, where faculty members are expected to bridge the gap between documented curriculum and current practice.

Where Faculty Readiness Becomes a Constraint

Faculty capability is often assumed to follow curriculum updates. In practice, it does not always keep pace.

When programs are delayed, faculty members may find themselves teaching content that has already shifted in the industry. Even when updated material is available, familiarity with tools and workflows takes time to build.

In one business school, analytics modules were introduced into a core program. The curriculum included current platforms and techniques, but only a portion of the faculty had hands-on experience with them. Some adapted quickly, while others relied on older material or theoretical explanations.

This created uneven learning experiences across the same program.

The issue here is not a lack of intent or effort. It is a question of timing and exposure. Faculty development programs often run on separate schedules, and they do not always align with how quickly industry practices evolve.

At this point, institutions often look outward. They bring in industry experts, form advisory boards, or collaborate with external partners. These efforts are valuable, but they introduce another challenge when it comes to integrating industry inputs into formal curriculum structures.

Why Industry Inputs Are Difficult to Integrate into Curriculum

Industry feedback is usually immediate and specific. It reflects what is being used in real projects, often based on recent changes in tools or processes.

Academic systems, on the other hand, require structured and validated input before changes can be made. This difference in format creates friction.

An industry partner might highlight a shift in how a particular tool is being used in enterprise settings. Translating that into curriculum requires mapping it to learning objectives, assessment methods, and credit structures. This is not a quick process.

Even when institutions are aware of changes, acting on them within existing systems takes time.

Where The Disconnect Shows Up Most Clearly

Industry inputs often arrive as observations or recommendations, while academic changes require formal documentation and alignment across multiple stakeholders. What is clear in practice becomes complex when it needs to be standardized.

Short-term skill shifts are also difficult to embed in long-term program structures. By the time a change is formalized, the original context may have already evolved.

This is why many institutions begin exploring alternative approaches that allow them to respond faster without waiting for full program revisions.

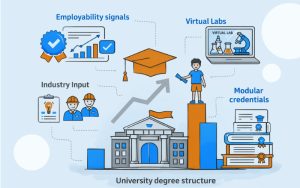

How Modular and Adaptive Curriculum Models Are Actually Being Used Inside Programs

By the time institutions reach this point, they are usually aware that full program revisions cannot keep up. The question then shifts from how to redesign the curriculum to something more practical: where changes can be introduced without triggering the entire approval process again.

This is where modular structures start to appear, not as a formal strategy in most cases, but as a workaround that gradually becomes part of how programs operate.

Instead of reopening the entire degree for revision, smaller components are introduced within the existing structure. These may take the form of short modules, applied labs, or industry-linked sessions that sit inside a course but are not locked into the same update cycle as the rest of the curriculum.

In one engineering program, this showed up as a parallel track focused on digital tools. The main syllabus remained unchanged because it was already approved for the next academic cycle. What changed was how certain portions of the course were delivered. Faculty worked with updated material that reflected current tools, while the official curriculum documentation stayed as it was. Over time, some of these updates were absorbed into the formal structure, but not immediately.

What becomes noticeable in these cases is that flexibility is not created at the program level. It is created at the edges, in areas where institutions have some room to adjust without going back through every approval step.

This does not remove the underlying constraints. The main curriculum still moves at the same pace, and large changes still require the same level of validation. But it does allow parts of the learning experience to stay closer to what is happening in the industry.

It also explains why external partners begin to show up more frequently at this stage. Not because institutions are outsourcing curriculum design, but because they need a way to bring in current practices without restructuring the entire program each time something changes.

How MITR Learning and Media Works Within This Structure

MITR Learning and Media works with institutions that are trying to reduce curriculum lag without disrupting existing systems.

In one engagement, a university needed to update its technology curriculum but could not complete a full revision within the academic year. Instead, MITR supported the introduction of modular learning components that aligned with current industry practices. These components were mapped to existing courses, so they could be delivered without requiring immediate program-level approval.

In another case, faculty teams were supported with structured industry inputs that were already aligned with course objectives. This reduced the effort required to integrate external perspectives into teaching, while still maintaining academic consistency.

What makes this approach workable is that it does not attempt to bypass institutional processes. It works within them, identifying points where flexibility is possible, and using those to introduce updates.

Over time, some of these modular changes are absorbed into formal curriculum revisions. Others continue to operate as adaptive layers that keep programs relevant between major updates.

A Structural Limitation, Not a Design Problem

Curriculum lag continues to be discussed as an issue of design or responsiveness. The cases above suggest that it is shaped more by governance structures and approval cycles.

Programs are delayed not because institutions are unaware of industry changes, but because the systems that manage those programs are built for stability. That stability comes with trade-offs.

Conversations about higher education reform in 2026 are beginning to reflect this shift in understanding. There is more focus on how approval systems can become more flexible, and on how adaptive curriculum design can exist alongside traditional structures.

The problem is not hidden. It is visible in timelines, in delivery gaps, and in increasing reliance on parallel learning models.

What remains is how institutions choose to respond to it.

Some are beginning to adjust their structures. Others are still working within fixed systems, trying to manage change without altering how decisions are made. The difference is starting to show in how well programs stay relevant.

MITR Learning and Media works with institutions to reduce curriculum lag through modular, industry-aligned learning models that fit within existing academic structures. Contact MITR Learning and Media to explore how your programs can stay aligned with evolving industry needs.

FAQs

Why is accessibility essential to STEM education for students with special needs?

Accessible STEM eLearning means that all students, regardless of gender or special needs, can take part in learning programs. It is a step toward eliminating educational inequalities and fostering diverse innovation.

In STEM education, what are some common problems encountered by students with special needs?

Common issues include overly complex course formats, labs and visuals that have not been adapted, insufficient assistive technologies, and a lack of customized learning resources. There are also systemic issues, such as learning materials that are not inclusive and teachers who have not been trained to support these students.

How can accessibility be improved in STEM eLearning through Universal Design for Learning (UDL)?

UDL improves the accessibility of STEM content through flexible teaching and assessment methods. It allows learners to access and engage with content in multiple ways and to demonstrate their understanding in multiple ways.

What are effective multisensory learning strategies for accessible STEM education?

Examples of multisensory learning strategies in accessible STEM include graphs with alt text, audio descriptions of course materials, tactile models that learners with visual impairments can explore through touch, captioned videos for learners with hearing impairments, and interactive simulations. Together, these give all learners a choice in how they access content, whether through physical, visual, auditory, video, or written representations.

Which assistive technologies are needed to provide accessible STEM material?

Providing access to STEM material requires technologies such as screen readers, specially designed math input applications, braille displays, and accessible graphing calculators.

How can STEM educators approach designing assessments for students with special needs?

When designing assessments for students with special needs, useful tactics include offering adaptive learning pathways in more than one format, using oral and project-based assessments, and providing feedback through multiple channels.

What is the role of schools and policymakers in supporting accessible STEM education?

Educational institutions should focus on training teachers and support staff and on investing in assistive technology, while also working toward inclusive curriculum policies.

Can you share examples of successful accessible STEM education initiatives?

Initiatives like PhET Interactive Simulations, Khan Academy accessible learning resources, Labster virtual laboratory simulations, and Girls Who Code’s outreach are examples of effective practice.

How can Mitr Media assist in creating accessible STEM educational content?

Mitr Media is focused on designing and building inclusive e-learning platforms and multimedia materials with accessibility standards in mind so that STEM material is usable by all learners at different levels of need.

What value does partnering with Mitr Media bring to institutions aiming for inclusive STEM education?

Mitr Media has expertise in implementing assistive technology, applying Universal Design for Learning, and providing ongoing support to organizations undergoing transformation, helping them turn their STEM curriculum into an accessible and engaging learning experience.